Last updated at Mon, 06 Nov 2017 21:22:35 GMT

If you look at any OO-based codebase of a nontrivial size, you’ll [hopefully] find well understood behavior formalized and encapsulated through the effective use of polymorphism- either via interfaces which decouple calling code from a types’ implementation, or via sub typing to share code common to multiple types.

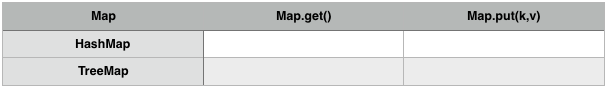

To take an example from a statically typed language like Java, let’s look at the Map interface and a few of its implementations in the standard library:

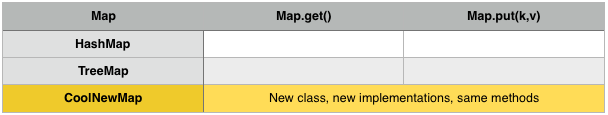

A receiving method which takes type Map doesn’t have to concern itself with the different implementation details of a HashMap or TreeMap; it’s enough to rely on the fact that both concrete types support the get() and put(k, v) methods. If we subsequently want to use a new Map implementation, CoolNewMap, we can do this without making any code changes. Aggregating behavior across concrete types like this is commonplace in the Java / C# world:

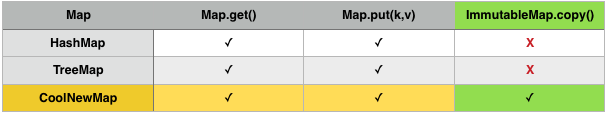

Achieving polymorphism through aggregation over interfaces has long been preferred over inheritance in object-oriented systems. However, what happens if you want to extend the capabilities of an existing concrete type? This is awkward in languages like Java:

While CoolNewMap is free to implement both Map and ImmutableMap, a receiver taking Map as an argument can’t accept it. Even the ImmutableMap interface directly extends Map, we can’t leverage the extra methods in code that looks for Map without narrowing the signature to ImmutableMap (or casting, but let’s pretend we can’t do that for now). Additionally, we can’t make HashMap and TreeMap implement the new interface because even though they’re non-final, we don’t have control over the code. You probably come across this all the time- for example, say we have a database client from a vendor which implements IDBClient:

public final class ProprietaryDBClient implements IDBClient {

@Override

public void doSomething() {

// implementation here

}

}

Even if we somehow have full control over the IDBClient interface, our vendor has given us a component which:

- we can’t change

- is marked as final

Hmm. A common workaround for adding functionality to a sealed type like this is to box it in an outer one:

public class FancyDBShim implements SomeNewInterface, IDBClient {

@Override

public void doSomething() {

client.doSomething();

}

@Override

public void doSomethingNew() {

// new functionality!

}

}

Boxing has a few problems- it incurs a lot of boilerplate code if the interface you’re delegating is large, and more awkwardly, you lose the original type’s identity. Since you’re now ‘spoofing’ the underlying DB client, overriding the wrappers’ equals() method to behave like ProprietaryDBClient.equals() is probably going to cause a world of pain because they’re fundamentally not the same thing.

Some dynamic languages such as Ruby allow for the notion of ‘open’ classes which can be changed whenever it’s deemed necessary. If you’ve worked with any rails apps of a certain size, you’ll probably see this capability abused for the age-old practice of monkey-patching; if you’re really unlucky you might come across crazy stuff like this:

class String

def capitalize

“???”

end

end

And two completely unrelated types:

class A

def print_msg

puts “a”

end

end

class B

def print_msg

puts “b”

end

end

But as long as they both respond to invocations of print_msg, it’s perfectly valid to do this. Since statically typed languages like Java resolve call sites at compile time, we don’t have this luxury. It’s important to note though that dynamic typing doesn’t magically make it any easier to unobtrusively aggregate new functionality onto existing types- the only way Ruby lets you do this is through subtype polymorphism through reopening classes as detailed previously.

This begs the question- is there a way we can add new functionality to existing types while maintaining these invariants:

- The code for original code is left untouched

- The original type’s identity is left untouched

- We don’t extend the original type

This set of constraints has challenged language designers for years but Philip Wadler characterized the challenge as the ‘expression problem’ in the late 90s. He put it like this: “The goal is to define a datatype by cases, where one can add new cases to the datatype and new functions over the datatype, without recompiling existing code, and while retaining static type safety”.

The adding-new-cases bit should be familiar to Java/C# programmers- that’s where we extend our table vertically. The new-functions bit should be familiar to dynamic/functional programmers- that’s where we extend our table horizontally. A language that can do both solves the expression problem.

Clojure’s solution to the expression problem uses the concept of protocols to lay down specifications in a similar way to interfaces while also allowing you to extend existing types without any boxing or recompilation. Taking the example of our awkward DB client again, we might declare a protocol like this:

(defprotocol com.example.SomeNewFunctionality (doSomethingNew [this]))

Since Clojure is not an object-oriented language it has no concept of instance state; instead function definitions take an explicit ‘self’ parameter as the first argument, called this by convention. At this point our SomeNewFunctionality interface looks pretty similar to an equivalent Java interface, and in fact when running on the JVM it will generate a real interface called com.example.SomeNewFunctionality. Unlike a regular interface which can’t just be tacked onto the ProprietaryDBClient class, we can extend it with our protocol:

(extend com.dbvendor.ProprietaryDBClient

SomeNewFunctionality

{:doSomethingNew #(println %)})

If you’re not familiar with Clojure, #(…) is one of the few bits of syntax in the language aside from the macro system: it’s a shorthand for defining an anonymous function where % is the first and only argument- in this case, our implementation just prints the object, implicitly calling Java’s toString() method on the ProprietaryDBClient instance. We can confirm that any newly created instances both conform to the types ProprietaryDBClient and SomeNewFunctionality:

(def instance (…) ; get a DB client instance from somewhere

(isa? ProprietaryDBClient instance) ; => true

(isa? SomeNewFunctionality instance) ; => true

We can also invoke new functionality from SomeNewFunctionality on instance, just like any other function:

(doSomethingNew instance)

Protocols, therefore, solve the ‘case-by-case’ function definition requirement that interfaces or subtype polymorphism on their own cannot. It actually turns out that a pure Java solution was coined a few years ago (paper here), although relying on interface inheritance and generics is nowhere near as expressive as something like protocols which were designed from the ground-up to solve this problem. Above all I’m not trying to extol the virtues of one language over another- but hopefully from this you can see some of the challenges language designers have to face (and are sometimes vilified for!) and also the importance of well-defined, clearly separated and extensible interfaces, regardless of whatever programming language you’re using.

Ready to start getting insights from your applications? Sign up for a Logentries free trial today.